Promises: Using Promises To Evaluate Agent Reputation – Part 3

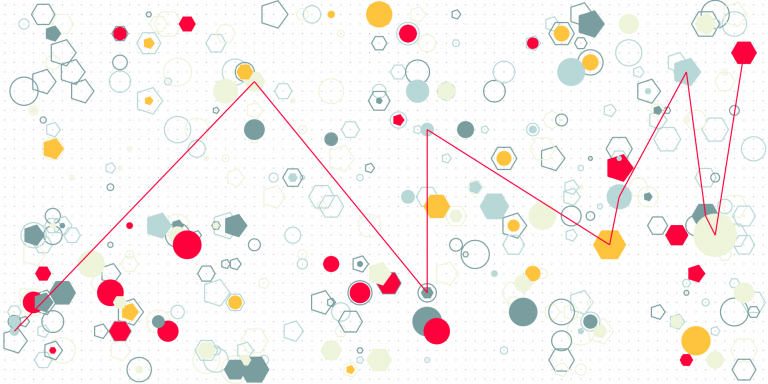

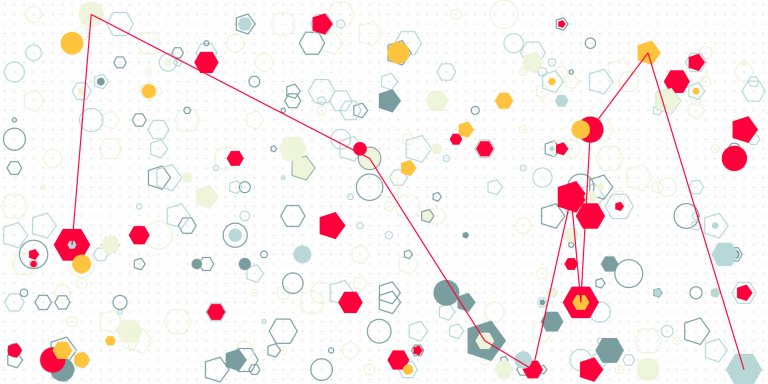

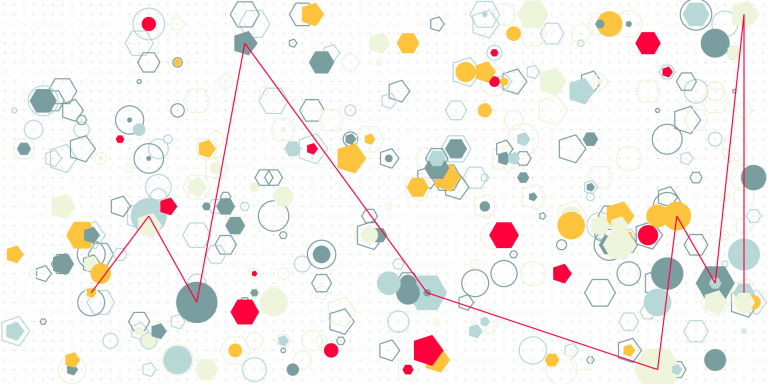

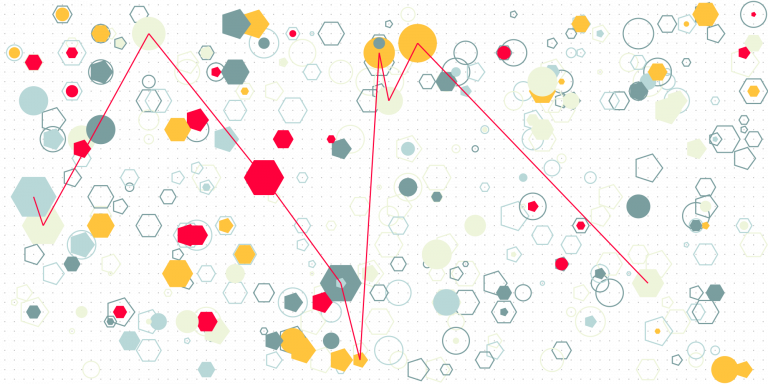

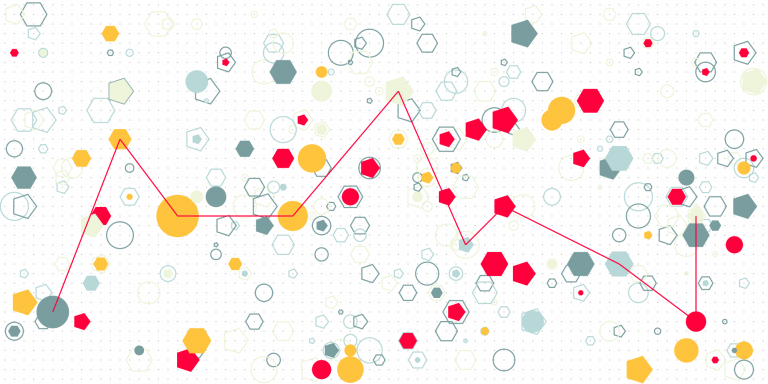

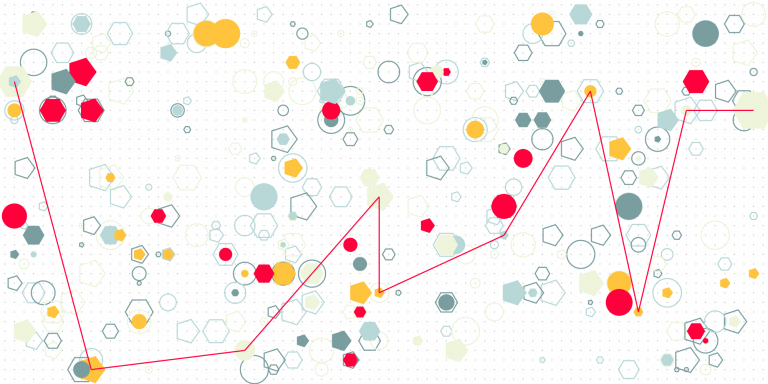

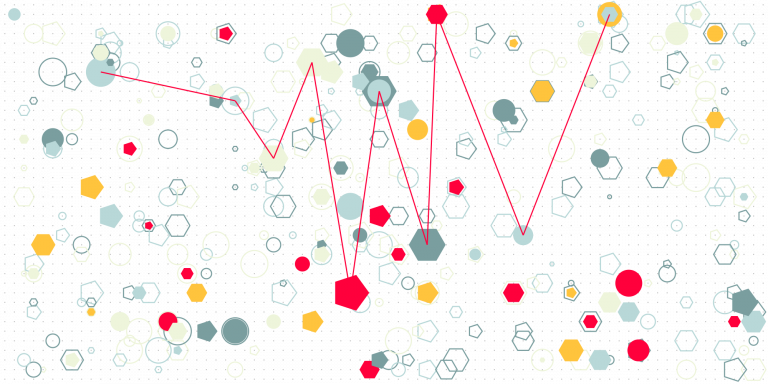

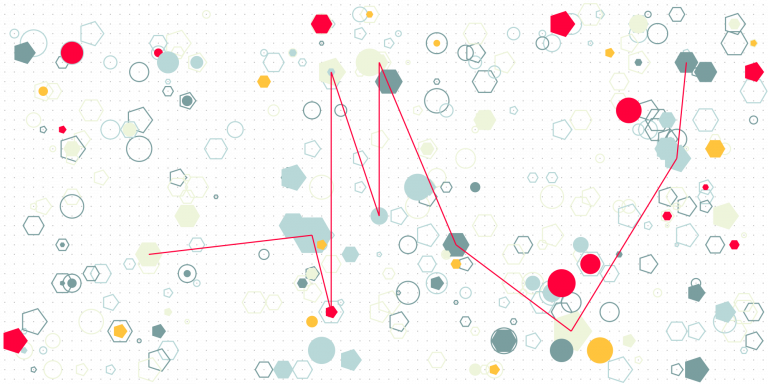

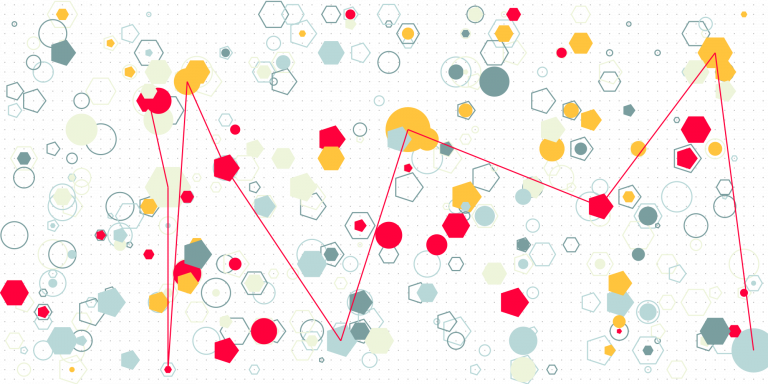

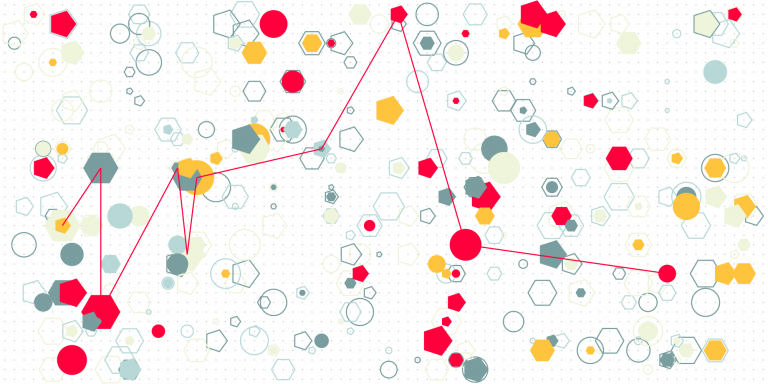

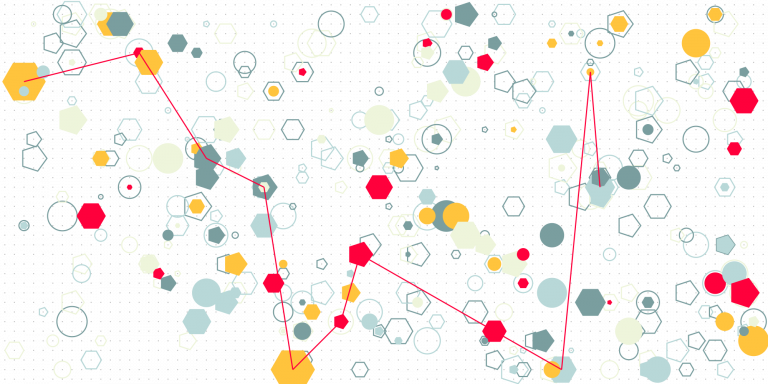

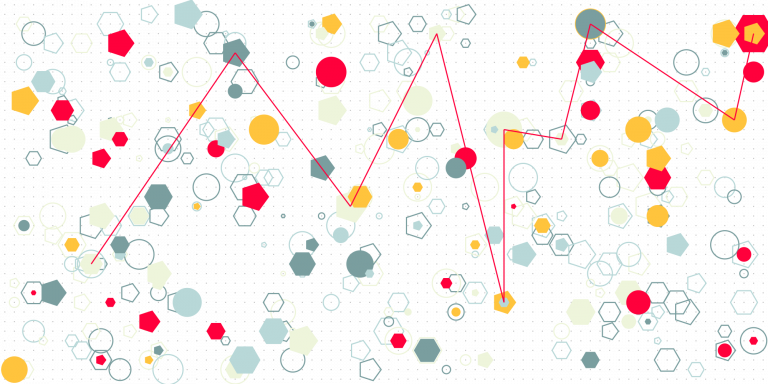

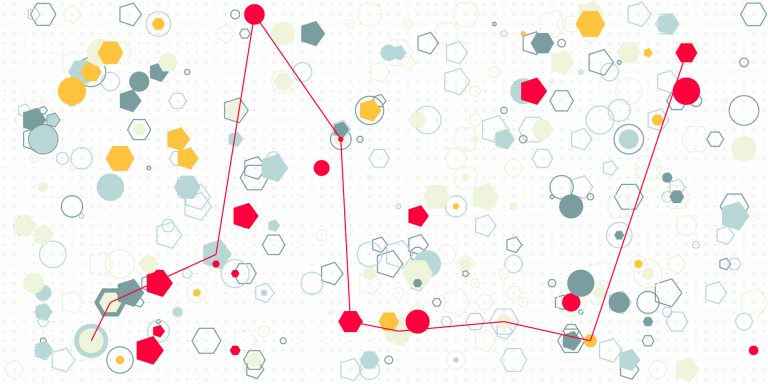

We explore what happens if a higher reputation increases an agent’s probability of fulfilling its promises.

What if fulfilling a promise increases an agent’s reputation? The framework in this text captures this idea.

The text presents a framework in which reputation is a function of promises between agents. The framework can be used to build a reputation mechanism for decision processes, multi-agent systems, and so on.
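To make the feedback loop concrete, here is a minimal sketch in Python. It is not the framework from the posts themselves: the `Agent` class, the smoothed reputation formula, and the `base` and `weight` parameters are all assumptions chosen for illustration. It shows reputation computed from kept and broken promises, and a fulfilment probability that rises with reputation.

```python
import random
from dataclasses import dataclass


@dataclass
class Agent:
    """Hypothetical agent that tracks the outcomes of its promises."""
    name: str
    kept: int = 0
    broken: int = 0

    def reputation(self) -> float:
        # Assumed formula: smoothed share of kept promises.
        # An agent with no history starts at 0.5.
        return (self.kept + 1) / (self.kept + self.broken + 2)

    def record(self, fulfilled: bool) -> None:
        # Each promise outcome feeds back into reputation.
        if fulfilled:
            self.kept += 1
        else:
            self.broken += 1


def fulfil_probability(agent: Agent, base: float = 0.5, weight: float = 0.4) -> float:
    # Assumed link: probability grows linearly with reputation,
    # clamped to the interval [0, 1].
    p = base + weight * (agent.reputation() - 0.5)
    return min(1.0, max(0.0, p))


def simulate(agent: Agent, rounds: int, rng: random.Random) -> float:
    # Each round the agent makes one promise and keeps it with a
    # probability that depends on its current reputation.
    for _ in range(rounds):
        agent.record(rng.random() < fulfil_probability(agent))
    return agent.reputation()


if __name__ == "__main__":
    agent = Agent("a1")
    final = simulate(agent, rounds=200, rng=random.Random(42))
    print(f"{agent.name}: kept={agent.kept} broken={agent.broken} reputation={final:.2f}")
```

With these assumed parameters, early outcomes matter: an agent that keeps its first few promises becomes slightly more likely to keep later ones, and the reverse holds for one that breaks them.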

We should reduce the cost of authorship and create an incentive mechanism that generates and assigns credibility to authors in a community.

I wrote in another note (here) that AI cannot decide autonomously because it does not have self-made preferences. I argued that its preferences are always a reflection of those that its designers wanted it to exhibit, or that reflect patterns in training data. The irony with this argument is that if an AI is making…

I use “depth of expertise” as a data quality dimension of AI training datasets. It describes how much expertise in a knowledge domain a dataset reflects. This is not a common data quality dimension in other contexts, and I haven’t seen it as such in discussions of, say, quality of data used for…

As currently drafted (2024), the Algorithmic Accountability Act does not require the algorithms and training data used in an AI System to be available for audit. (See my notes on the Act, starting with the one here.) The way that an auditor learns about the AI System is from documented impact assessments, which involve descriptions…

The short answer is “No”, and the reasons for it are interesting. An AI system is opaque if it is impossible or costly for it (or people auditing it) to explain why it gave some specific outputs. Opacity is undesirable in general – see my note here. So this question applies for both those outputs…

Should the explanations that an Artificial Intelligence system provides for its recommendations, or decisions, meet a higher standard than the explanations a human expert would be able to provide for the same? I wrote separately, here, about conditions that good explanations need to satisfy. These conditions are very hard to satisfy, and in particular the…

How good of an explanation can be provided by Artificial Intelligence built using statistical learning methods? This note is slightly more complicated than my usual ones. In logic, conclusions are computed from premises by applying well defined rules. When a conclusion is the appropriate one, given the premises and the rules, then it is said…

There are, roughly speaking, three problems to solve for an Artificial Intelligence system to comply with AI regulations in China (see the note here) and likely future regulation in the USA (see the notes on the Algorithmic Accountability Act, starting here): Using available, large-scale crawled web/Internet data is a low-cost (it’s all relative) approach to…

If an artificial intelligence system is trained on large-scale crawled web/Internet data, can it comply with the Algorithmic Accountability Act? For the sake of discussion, I assume below that (1) the Act is passed, which it is not at the time of writing, and (2) the Act applies to the system (for more on applicability,…

Sections 6 through 11 of the Algorithmic Accountability Act (2022 and 2023) have fewer practical implications for product management. They ensure that the Act, if passed, becomes part of the Federal Trade Commission Act, and they introduce requirements that the FTC needs to meet when implementing the Act. This text follows my notes on…

Section 5 specifies the content of the summary report to be submitted about an automated decision system. This text follows my notes on Sections 1 and 2, Section 3 and Section 4 of the Algorithmic Accountability Act (2022 and 2023). This is the fourth of a series of texts where I’m providing a critical reading…

Section 4 provides requirements that influence how to do the impact assessment of an automated decision system on consumers/users. This text follows my notes on Sections 1 and 2, and Section 3 of the Algorithmic Accountability Act (2022 and 2023). When (if?) the Act becomes law, it will apply across all kinds of software products,…

This text follows my notes on Sections 1 and 2 of the Algorithmic Accountability Act (2022 and 2023). When (if?) the Act becomes law, it will apply across all kinds of software products, or more generally, products and services which rely in any way on algorithms to support decision making. This makes it necessary…

The Algorithmic Accountability Act (2022 and 2023) applies to many more settings than what is, in early 2024, considered Artificial Intelligence. It applies across all kinds of software products, or more generally, products and services which rely in any way on algorithms to support decision making. This makes it necessary for any product manager…

The Algorithmic Accountability Act of 2022, here, applies to systems that help make, or themselves make (or recommend) “critical decisions”. Determining if something is a “critical decision” determines if a system is subject to the Act or not. Hence the interest in the discussion, below, of the definition of “critical decision”. The Act defines a…

The Algorithmic Accountability Act of 2022, here, is a very interesting text if you need to design or govern a process for the design of software that involves some form of AI. The Act has no concept of AI, but of Automated Decision System, defined as follows. Section 2 (2): “The term “automated decision system”…

Does the EU AI Act apply to most, if not all software? It is probably not what was intended, but it may well be the case. The EU AI Act, here, applies to “artificial intelligence systems” (AI system), and defines AI systems as follows: ‘artificial intelligence system’ (AI system) means software that is developed with…

In a previous note, here, I wrote that one of the requirements for Generative AI products/services in China is that if it uses data that contains personal information, the consent of the holder of the personal information needs to be obtained. It seems self-evident that this needs to be a requirement. It is also not…

IP compliance requirements on generative AI reduce the readily and cheaply available amount of training data, with a few consequences on how product development and product operations are done.

If an AI is not predictable by design, then the purpose of governing it is to ensure that it gives the right answers (actions) most of the time, and that when it fails, the consequences are negligible, or that it can only fail on inconsequential questions, goals, or tasks.